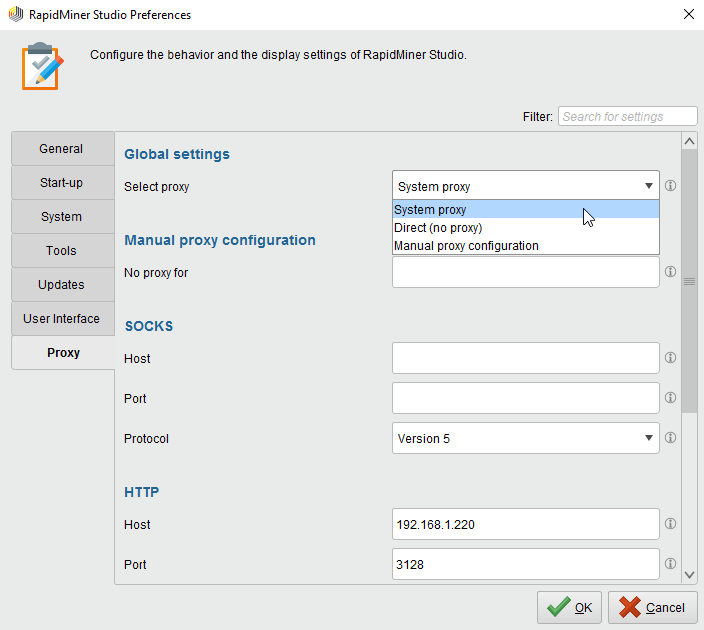

Synopsis

This operator delivers a performance value representing costs for the confidence rank of the true label.

The Performance (Ranking) operator should be used for tasks where it is important not only that the real class is selected, but also that it receives a comparatively high confidence. For each example, the operator sorts the confidences of all labels and generates costs depending on the rank position of the real label. You define these costs with the ranking_costs parameter.

Costs are entered for whole intervals, so you do not have to enter a cost value for each rank. Each interval is defined by its start rank and extends either to the start of the next interval or, for the last interval, indefinitely. All ranks before the first listed start rank receive costs of 0. Rank counting starts at 0, so the most confident label has rank 0. The costs are entered in the right column of the table, next to the start ranks.

For example, to assign costs of 0 if the true label is predicted with the highest confidence, 1 for the second place, 2 for the third place, and 10 for every following place, you have to enter:

| Start rank | Cost |
|---|---|
| 1 | 1 |
| 2 | 2 |
| 3 | 10 |

This input port expects a labeled ExampleSet. The Apply Model operator is a good example of an operator that provides labeled data. Make sure that the ExampleSet has a label attribute and a prediction attribute. See the Set Role operator for more details regarding the label and prediction roles of attributes.

The ExampleSet that was given as input is passed without change to this output port. This is usually used to reuse the same ExampleSet in further operators or to view it in the Results Workspace.

This port delivers a Performance Vector (we call it output-performance-vector for now). The Performance Vector is a list of performance criteria values, calculated on the basis of the label attribute and the prediction attribute of the input ExampleSet. The output-performance-vector contains the performance criteria calculated by this Performance operator (we call it calculated-performance-vector here). If a Performance Vector was also fed into the performance input port (we call it input-performance-vector here), the criteria of the input-performance-vector are added to the output-performance-vector as well. If the input-performance-vector and the calculated-performance-vector contain the same criterion with different values, the value from the calculated-performance-vector is delivered through the output port. The attached Example Process illustrates this concept.

ranking costs

Table defining the costs when the real label is not the one with the highest confidence.

Tutorial Processes

Applying the Performance (Ranking) operator on the Golf data set

The 'Golf' data set is loaded using the Retrieve operator. The Decision Tree operator is applied to it with default values for all parameters. The tree model generated by the Decision Tree operator is applied to the 'Golf-Testset' data set using the Apply Model operator. The labeled data from the Apply Model operator is provided to the Performance (Ranking) operator. The ranking costs parameter is configured as described above.
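The ranking-cost scheme described above can be sketched in a few lines of Python. This is a minimal, non-authoritative illustration, not RapidMiner's implementation. All function names (`rank_of_true_label`, `cost_for_rank`, `ranking_cost`, `merge_performance`) are hypothetical. So are the `(start_rank, cost)` interval representation, the labels `a` to `d`, and the criterion names `accuracy` and `ranking_cost`. Two behaviors are also assumptions, since the text does not specify them: ties between equal confidences are broken by label order, and the per-example costs are averaged over the ExampleSet. The sketch also models the documented merge rule for performance vectors, where the calculated value wins on a clash.

```python
from __future__ import annotations

from bisect import bisect_right

# Hypothetical helpers illustrating the ranking-cost idea; they are NOT RapidMiner API.


def rank_of_true_label(confidences: dict[str, float], true_label: str) -> int:
    """Return the rank of the true label; rank 0 is the most confident label.

    Assumption: ties keep the order of the labels in `confidences`
    (Python's sort is stable, also with reverse=True).
    """
    ordered = sorted(confidences, key=lambda label: confidences[label], reverse=True)
    return ordered.index(true_label)


def cost_for_rank(rank: int, intervals: list[tuple[int, float]]) -> float:
    """Look up a rank's cost in (start_rank, cost) intervals sorted by start_rank.

    Each interval runs until the next start rank; the last one never ends.
    Ranks before the first start rank cost 0.
    """
    starts = [start for start, _ in intervals]
    i = bisect_right(starts, rank) - 1  # last interval starting at or before `rank`
    return 0.0 if i < 0 else intervals[i][1]


def ranking_cost(examples: list[tuple[dict[str, float], str]],
                 intervals: list[tuple[int, float]]) -> float:
    """Assumption: per-example costs are averaged over the ExampleSet."""
    if not examples:
        raise ValueError("need at least one example")
    costs = [cost_for_rank(rank_of_true_label(conf, label), intervals)
             for conf, label in examples]
    return sum(costs) / len(costs)


def merge_performance(input_vector: dict[str, float],
                      calculated_vector: dict[str, float]) -> dict[str, float]:
    """Keep criteria from both vectors; on a clash the calculated value wins."""
    return {**input_vector, **calculated_vector}


# Intervals for the example in the text: rank 0 -> 0, 1 -> 1, 2 -> 2, 3 and beyond -> 10.
RANKING_COSTS = [(1, 1.0), (2, 2.0), (3, 10.0)]

examples = [
    ({"a": 0.6, "b": 0.3, "c": 0.1, "d": 0.0}, "a"),  # true label at rank 0 -> cost 0
    ({"a": 0.5, "b": 0.4, "c": 0.1, "d": 0.0}, "b"),  # rank 1 -> cost 1
    ({"a": 0.4, "b": 0.3, "c": 0.2, "d": 0.1}, "d"),  # rank 3 -> cost 10
]
score = ranking_cost(examples, RANKING_COSTS)  # (0 + 1 + 10) / 3

# The input vector's "ranking_cost" is overridden by the freshly calculated one.
merged = merge_performance({"accuracy": 0.8, "ranking_cost": 5.0},
                           {"ranking_cost": score})
print(score, merged)
```

Binary search over the sorted start ranks mirrors the interval semantics directly. A rank falls into the last interval whose start is not greater than it, and a rank below every start falls into no interval and costs 0.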